
Planning Multi-Fingered Grasps as Probabilistic Inference in a Learned Deep Network

Abstract

We propose a novel approach to multi-fingered grasp planning that leverages learned deep neural network models. We train a convolutional neural network to predict grasp success as a function of both visual information about an object and the grasp configuration. We then formulate grasp planning as inferring the grasp configuration that maximizes the probability of grasp success. We perform this inference efficiently with gradient ascent, using backpropagation to compute the gradient of the network's predicted success with respect to the grasp configuration. Our work is the first to directly plan high-quality multi-fingered grasps in configuration space using a deep neural network, without the need for an external planner. We validate our inference method by performing both multi-finger and two-finger grasps on real robots. Our experimental results show that our planning method outperforms existing planning methods for neural networks, while offering several other benefits, including being data-efficient in learning and fast enough to be deployed in real robotic applications.
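To make the idea of "gradient ascent inside the network" concrete, here is a minimal sketch, not the authors' implementation. It assumes a tiny randomly initialized MLP (the hypothetical `ToyGraspNet`) in place of the trained CNN, treats the object's visual features as a fixed input vector, and uses simple box bounds as stand-ins for joint limits. The hypothetical `plan_grasp` backpropagates the log success probability to the grasp-configuration inputs only and takes projected ascent steps with backtracking. Any prior term or additional constraints the paper may use are omitted here.

```python
import numpy as np


class ToyGraspNet:
    """Hypothetical stand-in for the learned grasp-success CNN:
    p(success | features, theta) = sigmoid(w2 . tanh(W1 [features; theta] + b1) + b2)."""

    def __init__(self, n_feat, n_theta, n_hidden=16, seed=0):
        rng = np.random.default_rng(seed)
        self.n_feat = n_feat
        self.W1 = rng.normal(0.0, 0.5, size=(n_hidden, n_feat + n_theta))
        self.b1 = rng.normal(0.0, 0.1, size=n_hidden)
        self.w2 = rng.normal(0.0, 0.5, size=n_hidden)
        self.b2 = 0.0

    def _forward(self, features, theta):
        x = np.concatenate([features, theta])
        h = np.tanh(self.W1 @ x + self.b1)
        z = self.w2 @ h + self.b2
        return h, z

    def log_prob(self, features, theta):
        _, z = self._forward(features, theta)
        return -np.logaddexp(0.0, -z)  # log sigmoid(z), numerically stable

    def grad_log_prob_theta(self, features, theta):
        h, z = self._forward(features, theta)
        p = 1.0 / (1.0 + np.exp(-z))
        # Backpropagation by hand: dlogp/dz = 1 - p, dz/dh = w2,
        # dh/da = 1 - h^2, da/dx = W1.
        grad_x = self.W1.T @ ((1.0 - p) * self.w2 * (1.0 - h ** 2))
        # Keep only the gradient w.r.t. the grasp configuration inputs.
        return grad_x[self.n_feat:]


def plan_grasp(model, features, theta0, lower, upper, step=0.1, iters=200):
    """Projected gradient ascent on log p(success) over the grasp configuration."""
    theta = np.clip(np.asarray(theta0, dtype=float), lower, upper)
    logp = model.log_prob(features, theta)
    for _ in range(iters):
        g = model.grad_log_prob_theta(features, theta)
        t = step
        improved = False
        # Backtracking: halve the step until the projected move does not decrease log p.
        while t > 1e-8:
            cand = np.clip(theta + t * g, lower, upper)
            cand_logp = model.log_prob(features, cand)
            if cand_logp >= logp:
                improved = True
                break
            t *= 0.5
        if not improved:
            break  # no ascent step found within tolerance: treat as converged
        theta, logp = cand, cand_logp
    return theta, float(np.exp(logp))


net = ToyGraspNet(n_feat=8, n_theta=4)
features = np.random.default_rng(1).normal(size=8)  # fixed "visual" features
lower, upper = -np.ones(4), np.ones(4)              # hypothetical joint limits
theta0 = np.zeros(4)
theta_star, p_star = plan_grasp(net, features, theta0, lower, upper)
print(float(np.exp(net.log_prob(features, theta0))), p_star)
```

Because only the configuration inputs are updated, the network weights and the image features stay fixed during planning; in a real system an automatic-differentiation framework would replace the hand-written chain rule.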

Publication

- "Planning Multi-Fingered Grasps as Probabilistic Inference in a Learned Deep Network", Qingkai Lu, Kautilya Chenna, Balakumar Sundaralingam, and Tucker Hermans, International Symposium on Robotics Research (ISRR) 2017.

Here is the corresponding bibtex entry:

@inproceedings{lu2017grasp,

title={Planning Multi-Fingered Grasps as Probabilistic Inference in a Learned Deep Network},

author={Lu, Qingkai and Chenna, Kautilya and Sundaralingam, Balakumar and Hermans, Tucker},

booktitle={Int’l Symp. on Robotics Research},

year={2017}

}

Grasp Performance Video

Experiments

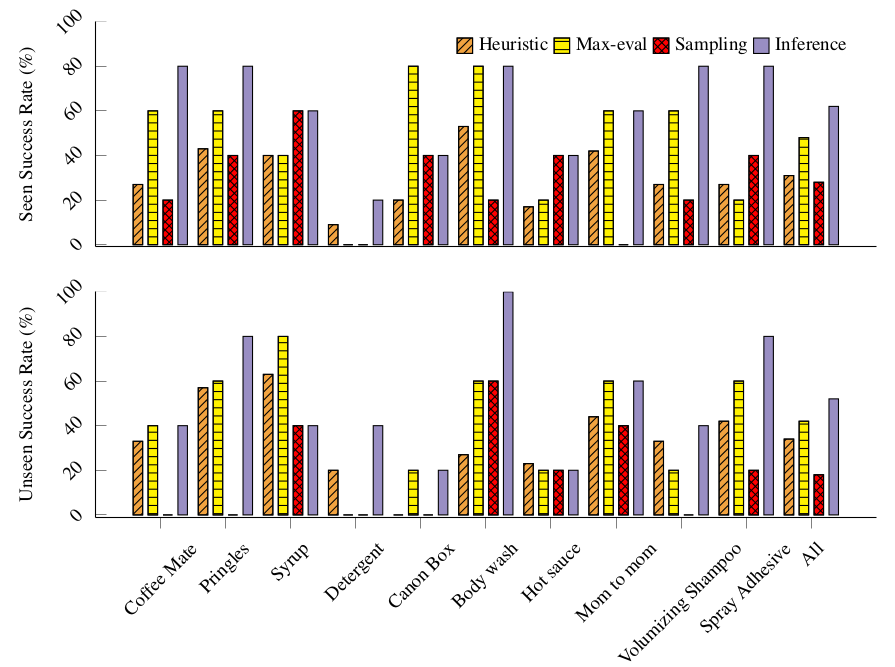

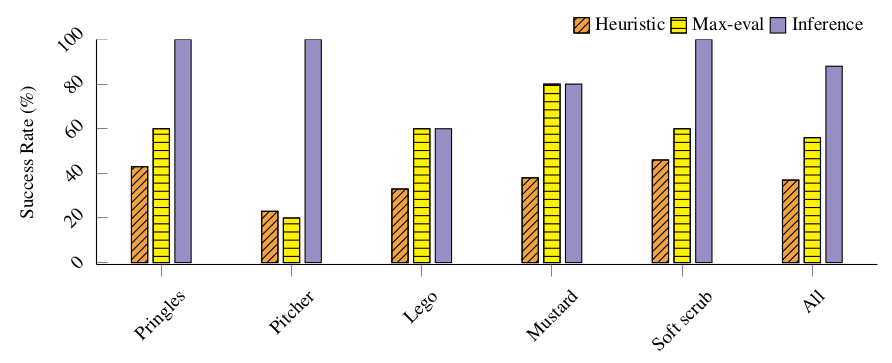

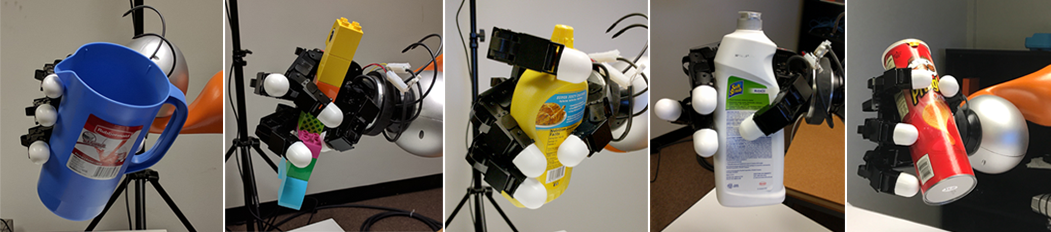

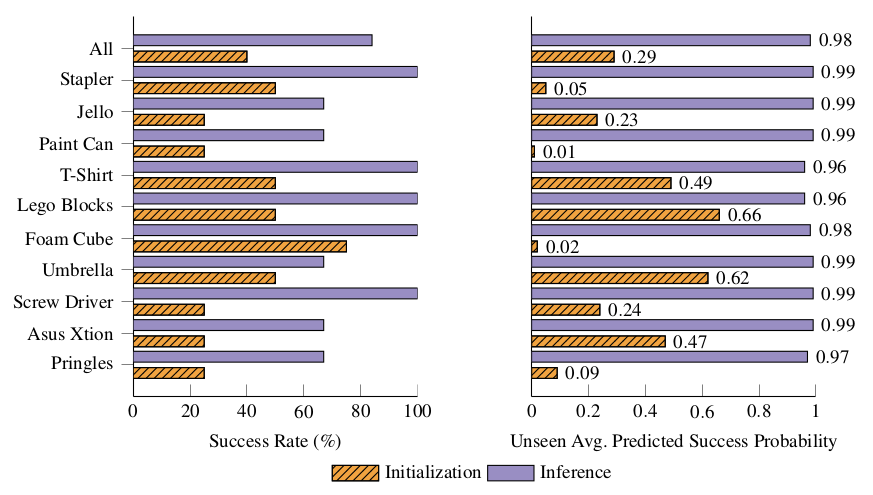

We conduct multi-finger grasp experiments using the same four-fingered Allegro hand mounted on a Kuka LBR4 arm, both in simulation and on the real robot. We compare against a heuristic grasp procedure, as well as the two dominant approaches to deep-learning-based grasp planning: sampling and regression. Finally, we show that our inference method also applies to two-finger grippers by performing real-world experiments on the Baxter robot.
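For readers unfamiliar with the sampling baseline, the following is a minimal sketch of a generic sampling-based grasp selector, not the baseline code used in these experiments. It assumes a hypothetical `toy_success_prob` function (with a made-up `TARGET` configuration) standing in for a learned success model whose image input is held fixed, and box bounds standing in for joint limits. The hypothetical `sample_best_grasp` draws candidate configurations uniformly, scores each with one model evaluation, and keeps the best.

```python
import numpy as np

# Hypothetical "ideal" configuration used only by the toy model below.
TARGET = np.array([0.2, -0.1, 0.4, 0.0])


def toy_success_prob(theta):
    """Hypothetical stand-in for a learned success model with the object's
    visual features held fixed: success is likelier near TARGET."""
    return 1.0 / (1.0 + np.exp(-(2.0 - 4.0 * np.sum((theta - TARGET) ** 2))))


def sample_best_grasp(success_prob, lower, upper, n_samples=500, seed=0):
    """Sampling-based selection: score uniform random candidates, keep the best."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    # Draw candidate configurations uniformly inside the box [lower, upper].
    candidates = rng.uniform(lower, upper, size=(n_samples, lower.size))
    # One model evaluation (forward pass) per candidate.
    scores = np.array([success_prob(c) for c in candidates])
    best = int(np.argmax(scores))
    return candidates[best], float(scores[best])


lower, upper = -np.ones(4), np.ones(4)
best_theta, best_p = sample_best_grasp(toy_success_prob, lower, upper)
print(best_theta, best_p)
```

Each candidate costs one forward pass, and a fixed sample budget covers the space ever more sparsely as the number of configuration dimensions grows (the Allegro hand alone has 16 joints); following the gradient of the success probability directly in configuration space is one way to sidestep that cost.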

Multi-finger Grasp Inference in Simulation

Real Robot Multi-finger Grasp Inference

Real Robot Two-finger Gripper Grasp Inference

Source Code & Data

Source code:

Multi-finger grasp learning and inference

Git tag: isrr_2017 under branch grasp_sim_qingkai

Git tag: isrr_2017 under branch qingkai_dev

Git tag: isrr_2017 under branch stiffness_controller

Two-fingered Learning and Inference

Baxter Two-fingered Grasp Pipeline

Data: